Anthropic has released ten ready-to-run finance agent templates for Claude.

That sounds like a set of workflow examples. It is more important than that.

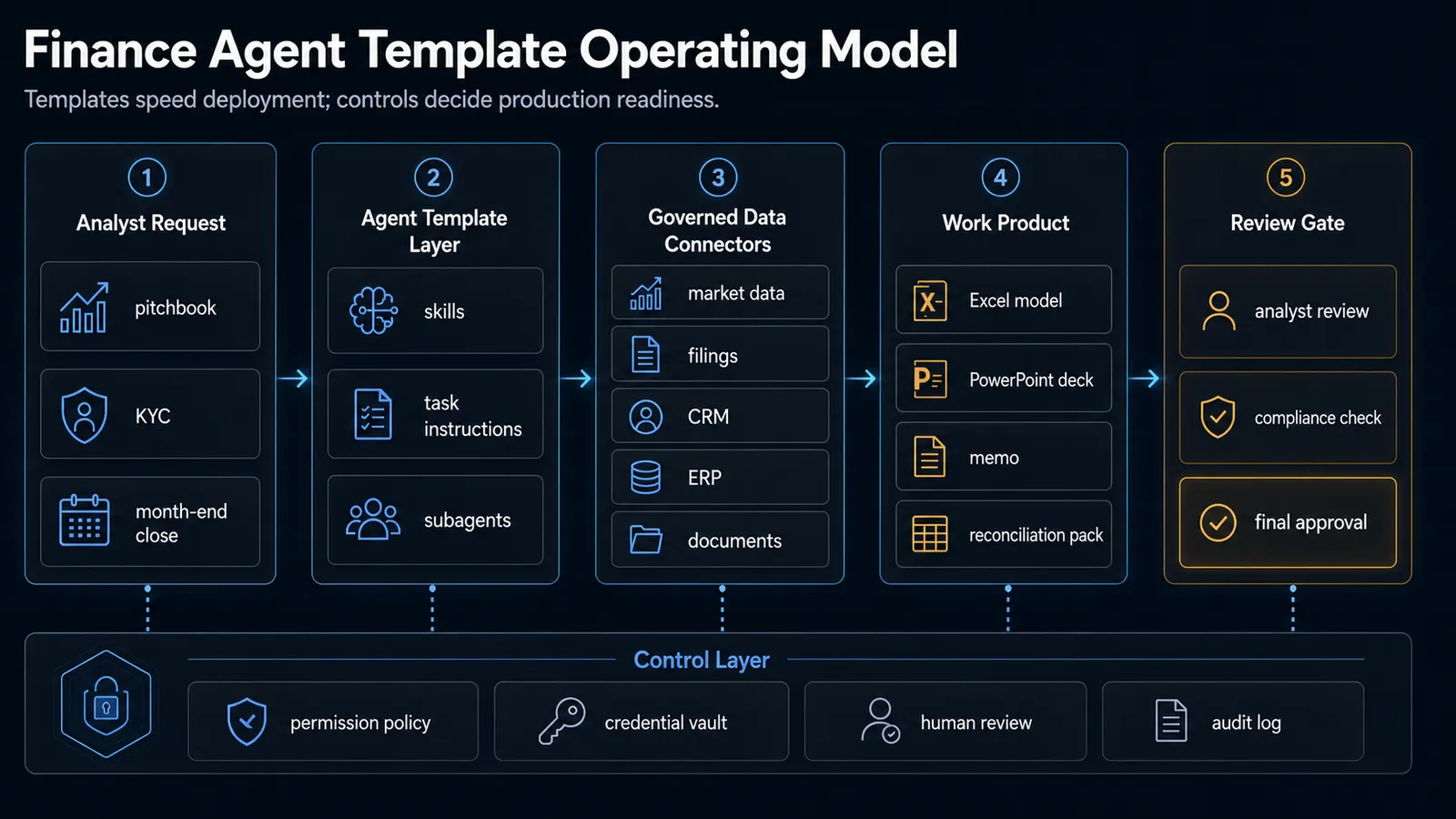

The launch shows where enterprise AI agents are heading next: away from generic chatbots and toward vertical workflow bundles that combine instructions, connectors, domain-specific skills, subagents, office-document output, and human approval.

For financial services, that matters because the work is not just “ask a model a question.” A real analyst workflow may involve SEC filings, market data, internal decks, spreadsheets, emails, CRM records, diligence documents, KYC files, journal entries, and review sign-offs. The agent has to work across all of that without pretending it can replace professional judgment.

This is not a Claude fan post. The useful takeaway is broader: if agent products are going to survive inside regulated teams, they need to ship as task-specific operating systems, not empty model windows.

TL;DR

- Anthropic announced ten finance-focused agent templates on May 5, 2026, covering work such as pitchbooks, KYC screening, earnings review, financial modeling, market research, valuation review, general-ledger reconciliation, month-end close, statement audit, and meeting preparation.

- The templates are available through Claude Cowork and Claude Code plugins, and as cookbooks for Claude Managed Agents.

- The real product pattern is the package: skills, connectors, subagents, office add-ins, credential handling, permissions, audit logs, and review checkpoints.

- This is different from a generic “AI analyst” prompt because the workflow produces concrete artifacts such as Excel models, PowerPoint decks, memos, reconciliations, and review packages.

- The adoption risk is also clear: finance teams should treat these agents as drafting and analysis systems, not as autonomous investment, accounting, legal, compliance, or onboarding authorities.

What Anthropic announced

Anthropic’s announcement introduces ten templates aimed at common financial-services workflows. The company groups them across research, client coverage, finance operations, and onboarding-style work.

The list includes:

- Pitch builder

- Meeting preparer

- Earnings reviewer

- Model builder

- Market researcher

- Valuation reviewer

- General ledger reconciler

- Month-end closer

- Statement auditor

- KYC screener

The templates can run as plugins in Claude Cowork or Claude Code, or be deployed through Claude Managed Agents. Anthropic also points developers to a public financial-services repository containing plugins, skills, connector references, and managed-agent cookbooks.

The important wording is “template.” These are not supposed to be magic one-click employees. They are reference architectures a firm can adapt to its own data providers, modeling standards, review policies, and compliance requirements.

That distinction is good. In finance, a generic model answer is not enough. A useful system has to know the difference between a draft pitchbook, a reviewed model, a signable memo, and a task that must be escalated.

Why this matters

Most AI productivity tools still ask the user to do the workflow design.

The user writes the prompt. The user uploads files. The user explains the context. The user checks the output. The user moves the work into Excel, PowerPoint, Word, Outlook, a data room, or a CRM. The model helps, but the user remains the integration layer.

Vertical agent templates invert that pattern.

Instead of asking every analyst to invent a repeatable prompt for comparable-company analysis, the template can package the process:

- which inputs are required

- which data sources should be queried

- which calculations matter

- which subtask can be delegated

- which artifact should be produced

- which review step must happen before delivery

- which logs need to exist for oversight

That is why this launch is worth watching. The value is not only model intelligence. The value is repeatable workflow design.
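To make that packaging concrete, here is a minimal Python sketch of what a template spec could look like. It is not Anthropic's format: the `WorkflowTemplate` class, its field names, and the example values (including the `ACME` ticker) are all hypothetical and simply mirror the list above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowTemplate:
    """Hypothetical template spec; each field maps to one item in the list above."""
    name: str
    required_inputs: tuple[str, ...]
    data_sources: tuple[str, ...]
    calculations: tuple[str, ...]
    delegated_subtasks: tuple[str, ...]
    artifact: str
    review_step: str
    logged_events: tuple[str, ...]

    def missing_inputs(self, provided: dict) -> list[str]:
        """Return the required inputs that the request did not supply."""
        return [key for key in self.required_inputs if key not in provided]


# Illustrative values only; a real firm would substitute its own conventions.
comps_template = WorkflowTemplate(
    name="comparable-company-analysis",
    required_inputs=("target_ticker", "peer_universe", "as_of_date"),
    data_sources=("sec_filings", "market_data"),
    calculations=("ev_to_ebitda", "price_to_earnings"),
    delegated_subtasks=("comparable_selection", "methodology_check"),
    artifact="comps.xlsx",
    review_step="analyst_sign_off",
    logged_events=("source_query", "calculation", "review_decision"),
)

request = {"target_ticker": "ACME", "as_of_date": "2026-05-05"}
print(comps_template.missing_inputs(request))  # ['peer_universe']
```

The point of the sketch is that the process lives in a reviewable object rather than in each analyst's head: a request that lacks a required input can be rejected before any model call happens.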

This connects directly to the broader agent trend we covered in “Why MCP Is Becoming the Default Standard for AI Tools in 2026.” Agents become more useful when they can access tools and data through governed interfaces. They become more dangerous when those interfaces are vague.

The finance-agent operating model

A production-ready finance agent is not one model call. It is a workflow system.

The shape looks like this:

analyst request

-> finance agent template

-> governed data connectors

-> spreadsheet, deck, memo, or review packet

-> human review and approval

-> audit trail

The strongest part of Anthropic’s announcement is that the templates are described as bundles of skills, connectors, and subagents. That is the correct abstraction.

Skills encode repeatable domain knowledge and steps. Connectors give the agent controlled access to data. Subagents isolate subtasks such as methodology checks, comparable selection, or evidence gathering.

The control layer matters just as much as the agent layer. A finance workflow needs permission policy, credential boundaries, human review, and audit logs before teams should trust it with real client, compliance, or reporting work.
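The shape above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not a real agent runtime: `AuditLog`, `run_workflow`, the source names, and the artifact name are all hypothetical, and the "agent" step is reduced to assembling a placeholder artifact.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditLog:
    """Append-only record of what happened at each stage (hypothetical shape)."""
    entries: list = field(default_factory=list)

    def record(self, stage: str, detail: str) -> None:
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "stage": stage,
            "detail": detail,
        })


def run_workflow(request, wanted_sources, allowed_sources, reviewer_approves, log):
    """Walk one request through connectors, artifact, review, and audit trail."""
    log.record("request", request)

    # Permission policy: the agent may only read sources on the allow-list.
    used = []
    for source in wanted_sources:
        if source in allowed_sources:
            used.append(source)
            log.record("connector_read", source)
        else:
            log.record("connector_denied", source)

    # The agent only ever produces a draft that is pending review.
    artifact = {"name": "draft_memo.docx", "sources": used, "status": "pending_review"}
    log.record("artifact", artifact["name"])

    # The final status comes from the human reviewer, not from the agent.
    artifact["status"] = "approved" if reviewer_approves else "rejected"
    log.record("review", artifact["status"])
    return artifact


log = AuditLog()
result = run_workflow(
    request="Summarize the latest quarterly filing",
    wanted_sources=["sec_filings", "crm_notes"],
    allowed_sources={"sec_filings"},
    reviewer_approves=False,
    log=log,
)
print(result["status"])                          # rejected
print([entry["stage"] for entry in log.entries])
```

Even in this toy version, the denied connector read and the rejection both end up in the log, which is the property a risk team would ask about first.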

The Microsoft 365 angle

Anthropic also emphasized Claude’s work across Excel, PowerPoint, Word, and Outlook.

That matters because finance work is unusually document-native. Analysts do not only want a text answer in a chat window. They need an updated model, a formatted deck, a memo, a reviewed statement package, or an email draft that carries the same context as the spreadsheet.

This is where vertical agents become practical.

If an agent can draft a market memo but cannot update the model or deck, it is useful but incomplete. If it can analyze filings, update Excel, draft PowerPoint, and prepare an Outlook note while preserving context, it starts to fit the way the work actually moves.

The caveat is obvious: office output still needs review. A model that writes a formula, updates a slide, or summarizes a filing can still make mistakes. The difference is that the review target becomes a real artifact rather than a floating chat answer.

Why benchmarks are not the whole story

Anthropic cites Claude Opus 4.7 as leading Vals AI’s Finance Agent benchmark. Vals describes Finance Agent v1.1 as a benchmark for financial analyst tasks, including SEC filing research and tool use.

That is relevant, but it should not be overread.

Benchmarks can tell us whether a model is improving on a standardized task. They do not prove that a firm can safely deploy an agent into its real workflows. Production quality depends on data access, task decomposition, review behavior, source traceability, cost, latency, permissions, and the firm’s tolerance for error.

The more useful benchmark question for teams is not “Which model scored highest?” It is:

Can this agent produce a reviewable artifact with enough evidence, structure, and logging that a qualified professional can trust the workflow?

That is a higher bar.

Decision matrix: where finance agents fit first

| Workflow | Starting posture |
|---|---|
| Public-company filing research | Good early use case if citations and source trails are strong |
| Comparable-company analysis | Useful with analyst review and firm-specific methodology |
| Pitchbook first drafts | Useful for assembly, formatting, and initial narrative; not final client material |
| Meeting preparation | Strong use case when CRM and document access are governed |
| Earnings review | Useful for triage and model-update suggestions; analyst must verify assumptions |
| KYC file preparation | Useful for document assembly and gap detection; compliance review is mandatory |
| General-ledger reconciliation | Useful for break detection and routing; posting decisions need controls |
| Month-end close | Start with checklist support and variance commentary, not autonomous close authority |
| Investment recommendations | Do not automate as final output without professional, policy, and regulatory review |

The pattern is simple: start where the agent can gather, draft, reconcile, or package work. Be much more careful where the agent could approve, file, transact, advise, or bind risk.
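One way to encode that rule of thumb is a small policy lookup. The sketch below is an assumption about how a team might express it, not something from Anthropic's templates; the action names come from the sentence above, and the posture labels are made up for illustration.

```python
# Actions taken from the rule of thumb above; the labels are hypothetical.
AGENT_MAY_PERFORM = {"gather", "draft", "reconcile", "package"}
HUMAN_MUST_DECIDE = {"approve", "file", "transact", "advise", "bind_risk"}


def posture(action: str) -> str:
    """Map a proposed action to a starting posture."""
    if action in AGENT_MAY_PERFORM:
        return "agent_with_review"
    if action in HUMAN_MUST_DECIDE:
        return "human_decision_required"
    # Anything not explicitly classified takes the cautious path.
    return "escalate"


for action in ("draft", "transact", "rebalance"):
    print(action, "->", posture(action))
```

Defaulting unknown actions to `escalate` is a deliberate choice here: a new capability should have to be classified before the agent is allowed to use it.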

What builders should copy

Even if you do not work in finance, this launch is a useful blueprint for agent product design.

1. Ship workflows, not prompts

A template should contain a repeatable process, not just a clever instruction. Users should understand what the agent needs, what it will produce, and where review happens.

2. Treat connectors as production infrastructure

Data connectors decide what the agent can see. They need authorization, logging, source labels, and revocation. This is especially important when MCP servers and partner-built tools enter the workflow.
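As a rough sketch of those four properties, the Python below wraps an in-memory data source with authorization, logging, source labels, and revocation. Everything here is hypothetical (`GovernedConnector`, `AccessDenied`, the agent ID, the sample record); a real connector would sit in front of an actual data provider and a real identity system.

```python
import logging
from dataclasses import dataclass, field

logger = logging.getLogger("connector_access")


class AccessDenied(Exception):
    """Raised when an agent without a current grant tries to read."""


@dataclass
class GovernedConnector:
    source_label: str
    records: dict
    authorized_agents: set = field(default_factory=set)

    def grant(self, agent_id: str) -> None:
        self.authorized_agents.add(agent_id)

    def revoke(self, agent_id: str) -> None:
        # discard() is a no-op if the agent was never granted access.
        self.authorized_agents.discard(agent_id)

    def fetch(self, agent_id: str, key: str) -> dict:
        if agent_id not in self.authorized_agents:
            logger.warning("denied %s reading %s from %s", agent_id, key, self.source_label)
            raise AccessDenied(f"{agent_id} is not authorized for {self.source_label}")
        logger.info("%s read %s from %s", agent_id, key, self.source_label)
        # Every value leaves the connector tagged with where it came from.
        return {"value": self.records[key], "source": self.source_label}


crm = GovernedConnector(source_label="crm", records={"last_meeting": "placeholder note"})
crm.grant("meeting-preparer")
print(crm.fetch("meeting-preparer", "last_meeting"))

crm.revoke("meeting-preparer")
try:
    crm.fetch("meeting-preparer", "last_meeting")
except AccessDenied as exc:
    print(exc)
```

Because the source label travels with the value, a downstream artifact can show reviewers which connector each number came from instead of hiding it.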

3. Separate drafting from approval

The agent can draft a model, memo, deck, reconciliation packet, or diligence summary. The system should make it clear when the output is staged for review rather than final.
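A small state machine is one way to make that boundary explicit. The sketch below is illustrative only: the `Status` values, the `ALLOWED` transition table, and the two actor names are assumptions, not part of any Anthropic template.

```python
from enum import Enum


class Status(Enum):
    DRAFT = "draft"
    STAGED_FOR_REVIEW = "staged_for_review"
    APPROVED = "approved"


# Which actor may perform which transition (hypothetical policy).
ALLOWED = {
    (Status.DRAFT, Status.STAGED_FOR_REVIEW): {"agent", "human"},
    (Status.STAGED_FOR_REVIEW, Status.APPROVED): {"human"},
    (Status.STAGED_FOR_REVIEW, Status.DRAFT): {"human"},  # sent back for rework
}


def transition(current: Status, target: Status, actor: str) -> Status:
    """Return the new status, or raise if this actor may not make the move."""
    if actor not in ALLOWED.get((current, target), set()):
        raise PermissionError(
            f"{actor} may not move an artifact from {current.value} to {target.value}"
        )
    return target


status = transition(Status.DRAFT, Status.STAGED_FOR_REVIEW, actor="agent")
try:
    transition(status, Status.APPROVED, actor="agent")
except PermissionError as exc:
    print(exc)

status = transition(status, Status.APPROVED, actor="human")
print(status.value)  # approved
```

The agent can stage its own work, but there is no path to `APPROVED` that does not pass through a human actor.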

4. Make artifacts first-class

The value of a finance agent is not just a good answer. It is a usable .xlsx, .pptx, memo, checklist, or audit package that fits into the existing team workflow.

5. Log the agent path

If the agent used filings, market data, CRM notes, expert transcripts, or internal documents, the output should preserve enough provenance for a reviewer to retrace the path.
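A minimal way to picture this in Python: attach a source reference to each claim and flag the claims that have none. The `SourceRef` shape, the `unsupported_claims` helper, and the example claims and locators are placeholders invented for this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceRef:
    """Hypothetical provenance record linking one claim to one source."""
    claim: str
    source_type: str   # e.g. "filing", "market_data", "crm_note"
    locator: str       # enough detail for a reviewer to find the passage


def unsupported_claims(claims: list[str], refs: list[SourceRef]) -> list[str]:
    """Return the claims that no provenance record backs up."""
    cited = {ref.claim for ref in refs}
    return [claim for claim in claims if claim not in cited]


# Placeholder content, not real data.
claims = [
    "Revenue grew year over year",
    "Management expects margin expansion",
]
refs = [
    SourceRef(
        claim="Revenue grew year over year",
        source_type="filing",
        locator="annual report, income statement (placeholder locator)",
    ),
]

print(unsupported_claims(claims, refs))  # ['Management expects margin expansion']
```

A check like this could run before an artifact is staged for review, so that unsourced statements are surfaced to the reviewer rather than discovered later.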

Common mistakes

Calling it automation when it is really drafting

This is the easiest way to overpromise. Many finance-agent workflows should be framed as assisted drafting, research, reconciliation, and review preparation, not autonomous execution.

Ignoring internal methodology

Every firm has its own modeling assumptions, deck standards, escalation rules, and compliance policies. A template is useful only after it has been tuned to those conventions.

Letting connectors become invisible

If the agent pulls from multiple data sources, the output should not hide that. Reviewers need to know where the numbers and claims came from.

Skipping the control layer

Credential vaults, permission policies, and audit logs are not enterprise decoration. They are the difference between a pilot and something a risk team can discuss seriously.

Measuring only time saved

Time saved matters, but error reduction, review quality, compliance traceability, and rework reduction are better measures for regulated workflows.

How this fits with the bigger agent stack

This announcement sits beside several trends we have already covered on Open-TechStack.

“AWS MCP Server Is GA” showed that cloud-agent access needs identity, audit, and policy. “AgentCore Payments” showed that economic actions need budgets and approvals. “AI Agent Browser Security Checklist” showed that public websites need to distinguish useful agents from abusive automation.

Anthropic’s finance templates add another layer: industry-specific agent packaging.

The next competitive frontier may not be who has the most general assistant. It may be who ships the best workflow bundle for a specific job, backed by the right data connectors and the right control model.

Bottom line

Anthropic’s finance-agent templates are not important because they make a flashy claim about replacing analysts.

They are important because they show a more realistic path for enterprise agents: package the workflow, connect the data, produce a reviewable artifact, and keep humans in the approval loop.

That is less dramatic than “AI banker.” It is also far more credible.

If you are building agents for any serious domain, the lesson is clear: the model is only one layer. The product is the workflow around it.