Real-World Finance Document QA with Local LLMs is a high-impact topic because repository choice determines your long-term velocity, not just your first prototype. In RAG systems, weak repo selection often causes hidden operational costs: brittle ingestion flows, poor observability, weak evaluation, and difficult upgrades. Strong repository choices, on the other hand, compress delivery time and reduce failure rates across the entire lifecycle.

This guide focuses on free and open-source repositories and gives you a practical path from shortlist to production. The goal is not to copy a trend list; it is to choose tools that match your architecture constraints, team skill level, and reliability requirements.

What to evaluate before selecting repos

Use these criteria when shortlisting repositories for RAG pipelines:

- Pipeline coverage: ingestion, chunking, retrieval, generation, and evaluation support.

- Operational readiness: logging, retries, error handling, and maintainability.

- Ecosystem health: release activity, issue responsiveness, and community adoption.

- Interoperability: compatibility with your vector DB, model serving layer, and orchestration stack.

- Governance fit: license clarity, security posture, and reproducible deployment patterns.

If a repo scores high in demos but low in operations, treat it as experimental and isolate it from critical workloads.

Best GitHub repositories to start with

| # | Repository | Why it belongs in a RAG stack |

|---|---|---|

| 1 | kennethleungty/Finance-LLMs | This repository curates real-world LLM implementations in finance, showcasing enterprise platforms, specialized models, and integrated solutions deployed by leading financi |

| 2 | langchain-ai/langchain | Framework for building RAG chains with retrievers, tool calling, and memory patterns. |

| 3 | run-llama/llama_index | Data framework for ingestion, indexing, retrieval orchestration, and agentic RAG flows. |

| 4 | deepset-ai/haystack | Production-ready RAG pipelines with retrievers, generators, and evaluation components. |

| 5 | stanfordnlp/dspy | Declarative framework for optimizing prompting/programs in retrieval-augmented systems. |

| 6 | explodinggradients/ragas | Evaluation toolkit for measuring faithfulness, relevance, and RAG answer quality. |

These repositories are complementary rather than mutually exclusive. Many strong stacks combine an orchestration framework, an evaluation toolkit, and a gateway/routing layer for model resilience.

Reference architecture for a repository-first RAG stack

A resilient architecture usually follows this flow:

- Ingestion layer: loaders + normalization + document versioning.

- Indexing layer: chunking strategy + embeddings + vector storage.

- Retrieval layer: hybrid retrieval, reranking, context assembly.

- Generation layer: model routing, fallback policy, output constraints.

- Evaluation layer: faithfulness/relevance metrics and regression checks.

Keep each layer modular. The easiest way to avoid lock-in is to define clear interfaces between retrieval, generation, and evaluation.

Implementation plan (from zero to reliable)

Step 1 — Build a minimal vertical slice

Pick one real workflow (for example, policy Q&A, repository assistant, or support triage). Build a vertical slice that goes end-to-end from ingestion to answer output. Avoid multi-domain scope in the first week.

Step 2 — Add observability early

Add request tracing, prompt/context logs, and retrieval diagnostics before broad rollout. If a team cannot explain why a bad answer happened, improvement loops become guesswork.

Step 3 — Add evaluation gates

Use automated checks for retrieval relevance and answer faithfulness. A lightweight evaluation gate before deployment prevents silent quality drift.

Step 4 — Introduce fallback routing

Add at least one fallback model/provider path so transient outages do not stop your pipeline. Route failures gracefully and monitor fallback frequency as a quality signal.

Step 5 — Harden operations

Define runbooks for reindexing, schema migration, rollback, and incident response. Most production incidents are operational, not theoretical-model failures.

Common mistakes and how to avoid them

- Choosing by stars alone: stars indicate popularity, not operational fitness.

- Skipping evaluation: without metrics, quality regressions are discovered too late.

- Over-indexing context: larger context windows do not fix weak retrieval.

- Ignoring versioning: unversioned embeddings and indexes break reproducibility.

- Treating costs per token as total cost: include engineering and incident overhead.

30-day production readiness checklist

- Define baseline KPIs: task completion rate, first-pass quality, and cost per resolved task.

- Verify at least one fallback route and one rollback strategy.

- Stress-test malformed data, empty retrieval results, and long-context edge cases.

- Confirm logging and access controls for compliance-sensitive data.

- Lock dependency versions and document upgrade strategy.

Bottom line

The best repositories for RAG pipelines in 2026 are the ones your team can operate confidently under real constraints. Start with open-source building blocks that prioritize observability and evaluation, then scale in measured phases. A disciplined repository strategy delivers better quality, lower operating risk, and faster iteration than chasing one-size-fits-all stacks.

Sources

- Best LLMs for Financial Analysis: A Guide for BFSIs - Neurons Lab — An in-house approach can work for low-risk, standalone tasks, such as drafting internal content or summarizing documents, where you don’t need to train or fine-tune a model on your

- FinanceQA: A Benchmark for Evaluating Financial Analysis … — FinanceQA is a testing suite that evaluates LLMs’ performance on complex numerical financial analysis · tasks that mirror real-world investment work.

- LLMs for Financial Document Analysis: SEC Filings & Decks | IntuitionLabs — We present detailed data on LLM performance in financial QA and summarization tasks, including figures from recent studies and pilot projects. For instance, finance-specific LLMs l

- GitHub - kennethleungty/Finance-LLMs: Comprehensive Compilation of Real-World LLM Implementation in Financial Services · GitHub — This repository curates real-world LLM implementations in finance, showcasing enterprise platforms, specialized models, and integrated solutions deployed by leading financi

- RAG for Finance: Automating Document Analysis with LLMs — We will test two approaches: (1) directly questioning the vector database to extract specific details and (2) performing an automated extraction across multiple companies f

- A Survey of Large Language Models for Financial Applications: Progress, Prospects and Challenges — Leippold [156] demonstrates the susceptibility of financial sentiment analysis to adversarial attacks using GPT-3, highlighting the need for LLMs to ensure the reliability of AI in

Related posts

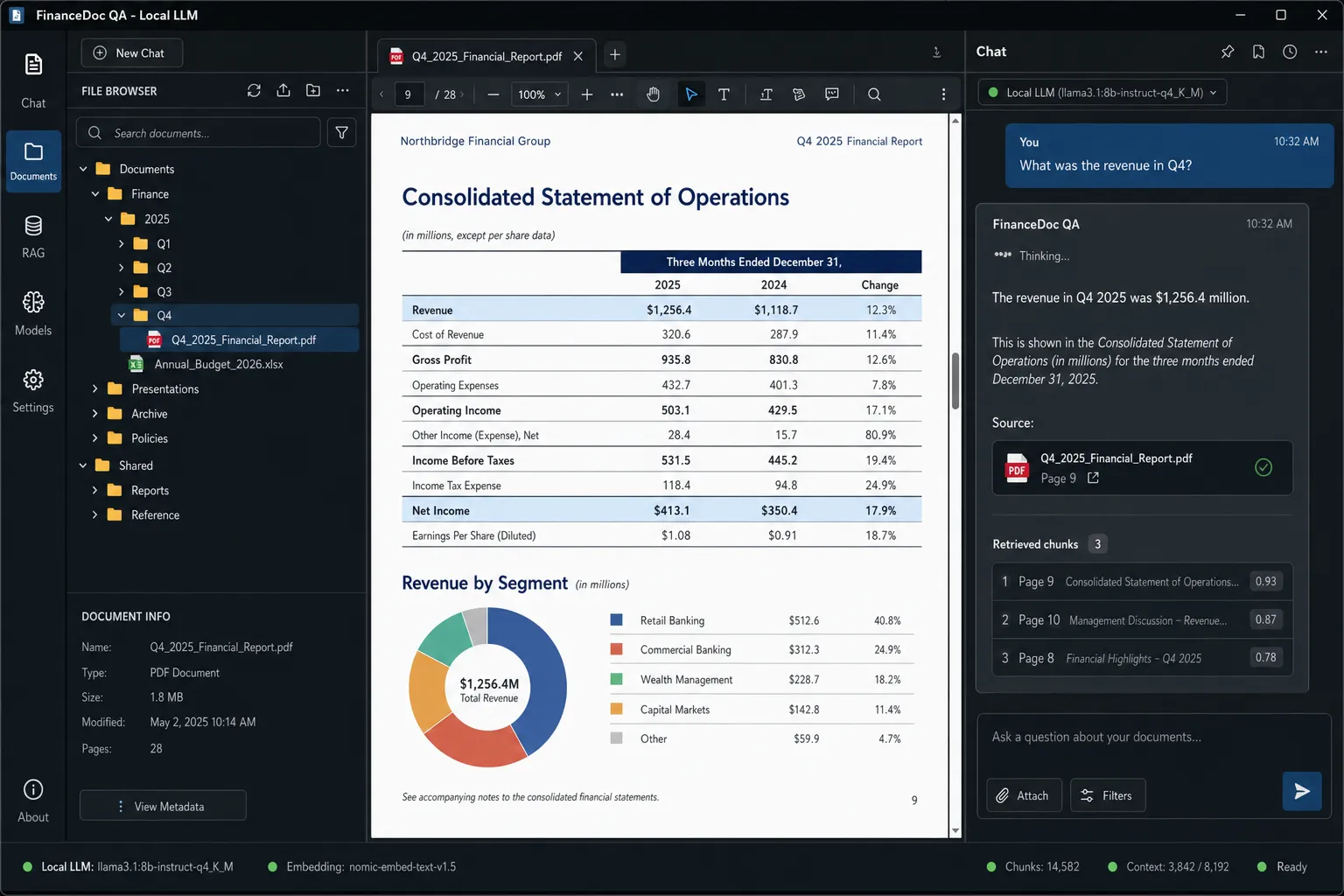

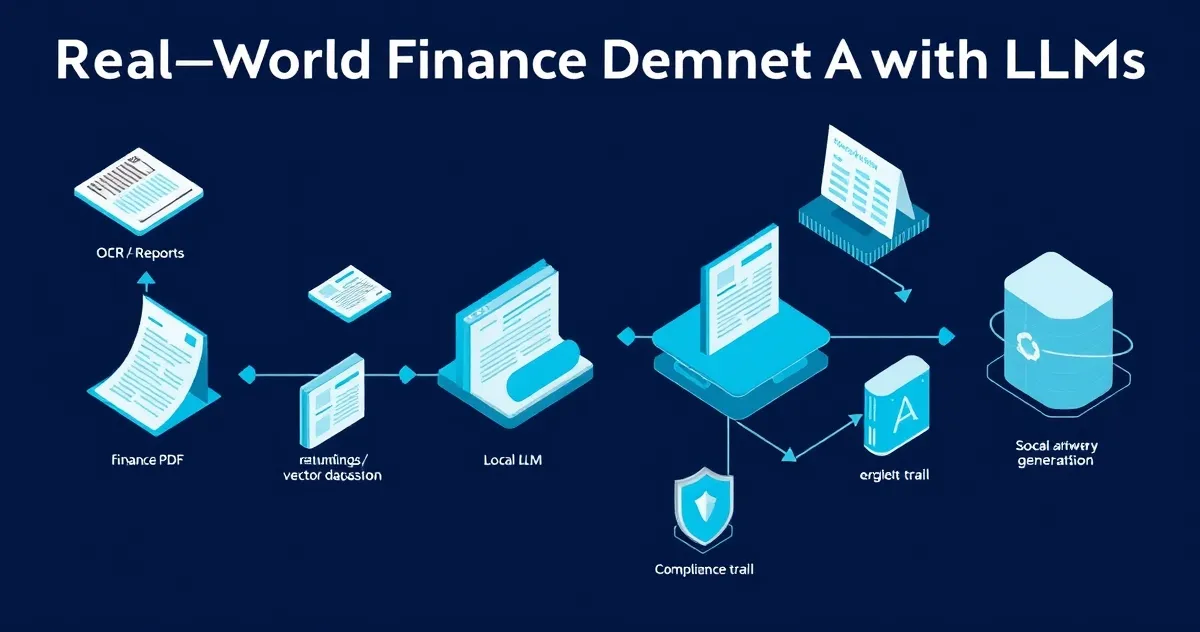

Screenshots