The AI compute race has a quiet failure mode that doesn’t show up in benchmark charts: your infrastructure plan can be “correct” and still become wrong.

On March 28, 2026, the Associated Press reported that Microsoft is taking over a major AI data center expansion in Abilene, Texas after OpenAI backed away from it. The site is tied to the “Stargate” infrastructure narrative (OpenAI + Oracle + partners), and the specific development partner cited is Crusoe. (AP)

Earlier, on March 12, 2026, Data Center Dynamics reported that Microsoft was in talks to take over a planned expansion at the same Abilene “Stargate” campus — a useful reminder that these deals often move long before they become official. (Data Center Dynamics)

This is not just a story about who leases which building. It’s a story about forecasting: the uncomfortable reality that even the richest AI orgs are constantly renegotiating what they actually need, when they need it, and who should carry the risk.

What happened (and why it’s messy)

The Abilene campus sits inside a wider “build big, build fast” pitch for U.S. AI infrastructure.

OpenAI’s own January 21, 2025 announcement for the Stargate Project positioned the buildout as starting in Texas and described a broader plan to deploy massive new compute capacity, with major partners named across finance, cloud, and silicon. (OpenAI)

But the March 28 AP report says OpenAI stepped away from a Texas expansion and Microsoft moved in to take it over.

Coverage of this Abilene story has been inconsistent on what is “canceled” versus what is simply being redirected. In March 2026, the Financial Times reported that Oracle and OpenAI had scrapped an Abilene expansion plan (while still pointing to a broader multi-site agreement). (Financial Times)

Oracle, for its part, has publicly pushed back on reporting that framed Stargate as being shut down, calling some coverage “false and incorrect” and saying the Abilene campus work with Crusoe remains on schedule. (Tom’s Hardware)

Read that contradiction as the point:

- hyperscale projects are modular (buildings, substations, networking phases)

- financing and demand forecasting are negotiated in chunks

- “canceled” often means “not at this site, not with this tenant, not under those terms”

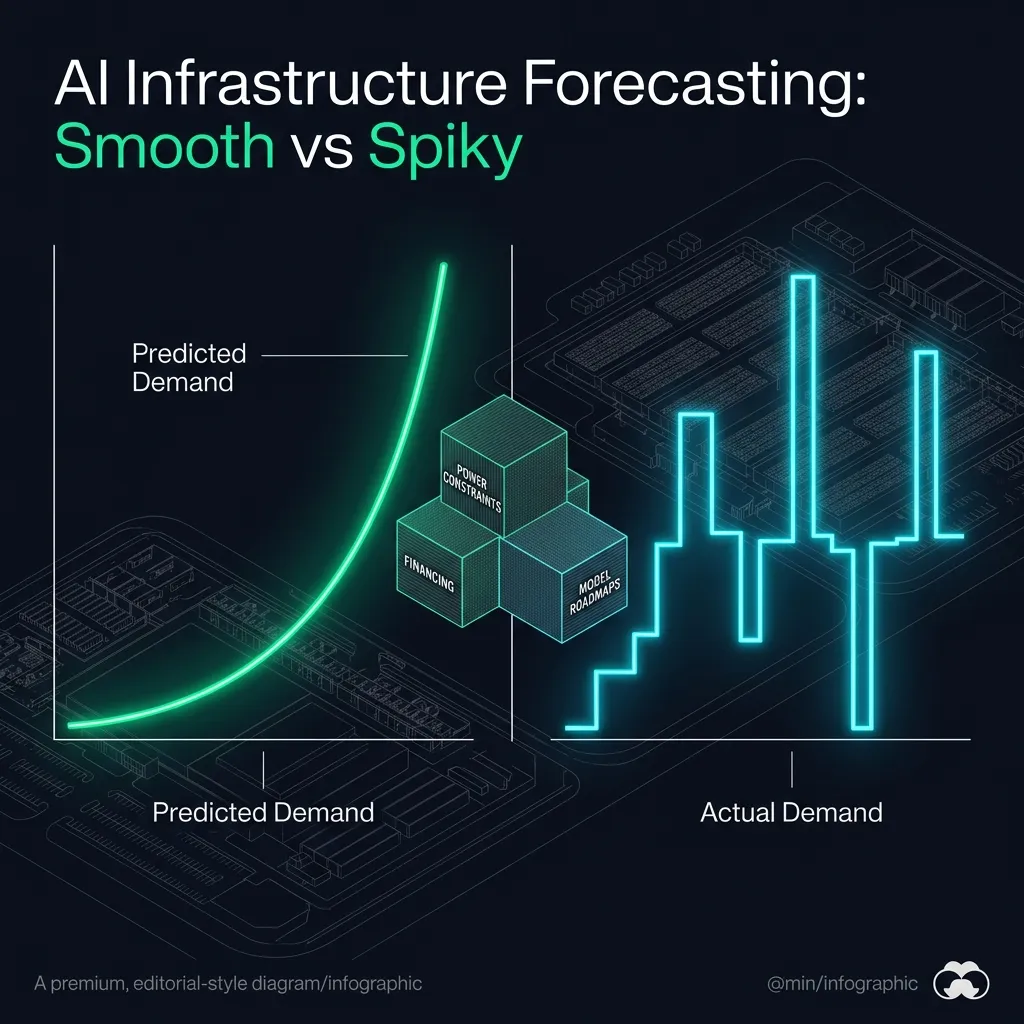

The real signal: AI infra demand is spiky, not smooth

AI product roadmaps don’t turn into stable capacity curves. They turn into step functions:

- a model training schedule moves, and you suddenly need the capacity 3–6 months later than planned

- an inference product takes off, and your “training campus” becomes the wrong investment

- a hardware generation slips or arrives early, and the power density assumptions change

If you’re a model lab, the default temptation is to solve this by overbuilding. But power and capital markets are increasingly forcing the opposite behavior: only build what you can justify to a counterparty.

And the counterparty has changed. The marginal “AI build” is no longer a simple cloud order. It is land, power, transformers, gas contracts, interconnect, and a multi-year construction queue.

That’s why this Abilene handoff matters: it’s a concrete example of how the compute race is moving from “who has the best model” toward “who can absorb the planning risk.”

Why Microsoft stepping in is strategically logical

Microsoft is in a position that’s structurally different from a pure model lab:

- it can repurpose capacity across many enterprise tenants

- it can amortize risk across a broader cloud portfolio

- it can treat “AI demand uncertainty” as a feature, not a bug

If OpenAI’s needs shifted (timing, location, or structure), Microsoft is the obvious buyer of last resort because it can still monetize the footprint — even if the exact workload changes.
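The pooling argument can be made concrete with a little arithmetic. The sketch below is illustrative only: it assumes tenants have identical, statistically independent demand, and the megawatt figures are made up for the example, not taken from any report. Under those assumptions, the relative volatility of total demand shrinks with the square root of the number of tenants.

```python
import math


def pooled_cv(mean_mw: float, std_mw: float, tenants: int) -> float:
    """Relative volatility (std / mean) of total demand across tenants.

    Assumes every tenant has the same mean and standard deviation and
    that tenant demands are independent (a strong simplification).
    """
    total_mean = tenants * mean_mw
    # Variances of independent demands add, so the std grows with sqrt(n).
    total_std = math.sqrt(tenants) * std_mw
    return total_std / total_mean


# Hypothetical numbers: each tenant wants 100 MW on average, give or take 50 MW.
single_lab = pooled_cv(100.0, 50.0, tenants=1)     # 0.5
cloud_pool = pooled_cv(100.0, 50.0, tenants=25)    # 0.1
```

With one tenant, demand swings by half its own mean; spread across 25 independent tenants, the same per-tenant uncertainty is a tenth of the total. Real workloads are correlated, so the actual benefit is smaller, but the direction of the effect is why a diversified cloud can hold a footprint that a single lab cannot.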

This also fits the theme we’ve been tracking across the compute stack: the infrastructure layer is where platform power accumulates, especially when geopolitics and supply constraints increase friction. (Related: The Weak Link in AI Chip Export Controls Is the Server Supply Chain)

A subtle read: “independence” is expensive

In 2026, “independent” AI labs keep talking as if they can detach from hyperscalers.

Sometimes they can — but only by becoming something hyperscaler-like themselves: capital-heavy, power-heavy, politically entangled, and operationally boring.

When a lab steps back from a campus-scale build, it doesn’t always mean “we don’t need compute.” It can mean:

- we don’t want to carry the balance-sheet risk right now

- we’d rather pay a premium for flexibility

- we’d rather spread workloads across multiple partners and geographies

That’s why big infrastructure announcements should be read as options, not commitments. The option has value even if the exact first site changes.
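A rough way to see why paying a premium for flexibility can be rational: compare the expected cost of committing to a full build against committing to less and buying any shortfall at a higher flexible rate. Everything in this sketch is a hypothetical assumption (the two demand scenarios, their probabilities, and the cost ratio); it is a toy model of the reasoning, not a description of any actual contract.

```python
def expected_cost(scenarios, committed_mw, build_cost_per_mw, flex_cost_per_mw):
    """Expected cost of a capacity plan under uncertain demand.

    scenarios: list of (probability, demand_mw) pairs.
    Committed capacity is paid for in every scenario, used or not;
    demand above the commitment is bought at the flexible rate.
    """
    total = 0.0
    for probability, demand_mw in scenarios:
        shortfall_mw = max(0.0, demand_mw - committed_mw)
        cost = committed_mw * build_cost_per_mw + shortfall_mw * flex_cost_per_mw
        total += probability * cost
    return total


# Hypothetical: demand is either 200 MW or 1000 MW, equally likely.
# Flexible capacity costs 50% more per MW than capacity you build yourself.
scenarios = [(0.5, 200.0), (0.5, 1000.0)]

full_build = expected_cost(scenarios, 1000.0, 1.0, 1.5)   # 1000.0
small_build = expected_cost(scenarios, 200.0, 1.0, 1.5)   # 800.0
```

In this toy setup the smaller commitment is cheaper in expectation even though every flexible megawatt costs more, because the full build pays for 800 MW that sit idle half the time. Change the probabilities or the premium and the answer flips, which is exactly why these plans keep getting renegotiated.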

What to watch next (if you build or buy compute)

Three practical things are worth tracking after this Abilene shift:

1. Who ends up controlling the “overflow capacity” market

If campus builds keep getting re-tenanted midstream, the winners will be the actors who can rapidly reallocate capacity:

- hyperscalers

- data center operators with deep financing

- vertically integrated “compute utilities”

This is the same logic behind extreme proposals like private mega-fabs and dedicated compute cities. (Related: Terafab: Musk’s AI Chip Factory Plan Shows How Extreme the Compute Race Has Become)

2. Power becomes the bottleneck, not GPUs

Once you’re at campus scale, “GPU availability” stops being the headline constraint. Interconnect and power provisioning start to dominate.

If you want to understand future AI competition, watch regional power politics as closely as CUDA roadmaps.

3. “Stargate” should be evaluated as a portfolio, not a campus

Whether Abilene expands under Microsoft, OpenAI, Oracle, or some mix, the larger question is simpler:

Where does the next multi-gigawatt footprint actually get built, and who is willing to sign for it?

Because in the end, model strategy is constrained by infrastructure reality — and infrastructure reality is constrained by financing and power.

Bottom line

The Abilene handoff is not a betrayal of the compute race narrative. It’s the most honest version of it.

In 2026, the most important competitive advantage may not be a single model release. It may be who can plan, finance, and redeploy compute faster than the roadmap changes.