DeepSeek-V4 Preview is the kind of model release that changes the evaluation checklist for open-weight AI systems. The headline is not only that DeepSeek is publishing a new generation of models; it is that the preview pairs Mixture-of-Experts scale with a native one-million-token context window and two clearly separated operating modes: a larger Pro model for frontier-style reasoning and a smaller Flash model for cost-sensitive deployment.

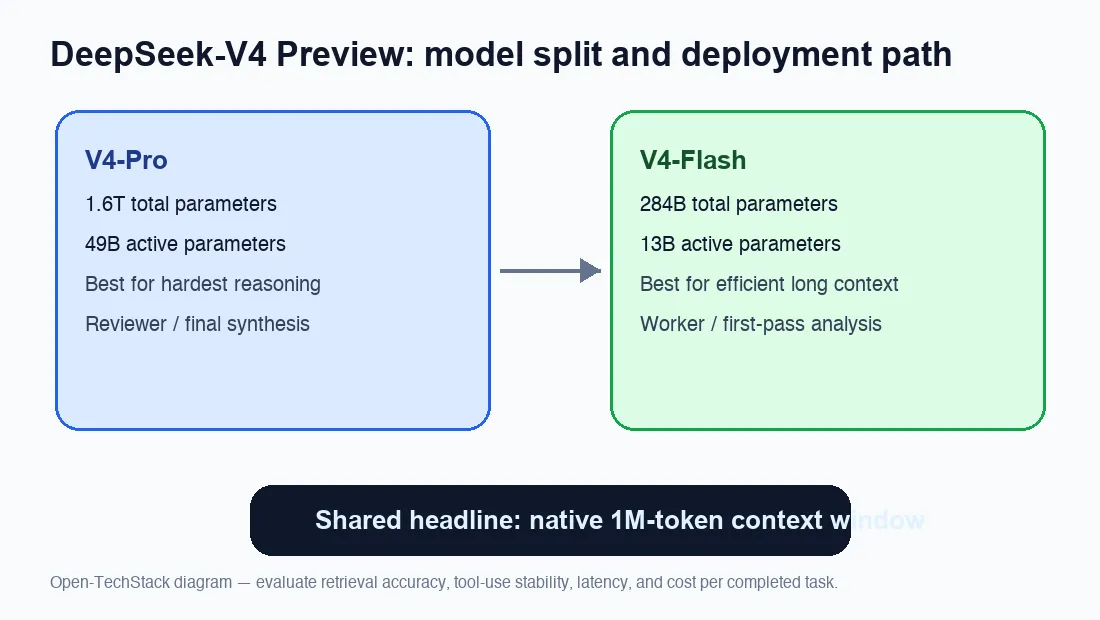

The official announcement describes DeepSeek-V4-Pro as a 1.6T-parameter MoE model with 49B active parameters, and DeepSeek-V4-Flash as a 284B-parameter MoE model with 13B active parameters. Both are positioned around a 1M-token context window, which makes the release especially relevant for coding agents, research assistants, legal-document workflows, long-form analysis, and retrieval-heavy enterprise systems where context size often becomes the practical bottleneck.

What DeepSeek announced

DeepSeek’s public announcement says the V4 Preview is live and open-sourced. The important part is the product split. V4-Pro is the capability-first model: larger total parameter count, higher active parameter budget, and a target profile that competes with top closed-source models. V4-Flash is the efficiency-first model: much smaller total and active parameter counts, while retaining the same long-context headline.

That split matters because it avoids a common problem in model releases: one model trying to serve every workload. Teams that need maximum reasoning quality can start with Pro. Teams that need fast iteration, lower inference cost, or a larger number of concurrent users can evaluate Flash. If the routing, context handling, and quantization ecosystem mature quickly, V4-Flash may become the more practical day-to-day option even when V4-Pro gets most of the benchmark attention.

Why the one-million-token context matters

A million-token window is not just a bigger prompt box. It changes the architecture of applications. A coding agent can keep an entire repository map, issue history, terminal logs, test failures, and documentation in view for longer. A research workflow can ingest multiple papers, background notes, and source documents without constant truncation. A compliance or accreditation workflow can compare large manuals, memoranda, evidence inventories, and checklists inside a single reasoning session.

The catch is that long context only matters when the model can use it reliably. Many long-context models technically accept large inputs but degrade when asked to retrieve small details, follow long chains of dependencies, or maintain state over tool calls. That is why the V4 Preview should be evaluated on retrieval accuracy, agent trajectory stability, latency, and cost per useful answer—not just maximum context length.

Model split: V4-Pro vs V4-Flash

| Model | Total parameters | Active parameters | Primary role | Context window |

|---|---|---|---|---|

| DeepSeek-V4-Pro | 1.6T | 49B | High-capability reasoning, agentic tasks, complex analysis | 1M tokens |

| DeepSeek-V4-Flash | 284B | 13B | Faster and cheaper long-context inference | 1M tokens |

This table is the key takeaway. DeepSeek is using sparse activation to separate total capacity from per-token compute. A 1.6T total-parameter model does not activate all parameters for every token; only a subset participates. That is the MoE bargain: large stored knowledge and specialization, but lower active compute than a dense model of similar total scale.

For builders, this means the real benchmark is workload-specific. A customer-support system with long history may prefer Flash if latency and cost are predictable. A codebase migration agent may prefer Pro if it needs deeper reasoning over thousands of files and logs. A document-analysis pipeline may test both: Flash for broad first-pass extraction and Pro for final synthesis.

Open weights raise the stakes

The open-weight framing is strategically important. Open models let infrastructure teams inspect, host, quantize, route, and fine-tune more freely than closed APIs. Even when a closed model remains stronger in some tasks, an open-weight model can win on data control, deployment flexibility, regional compliance, and cost governance.

For Open-TechStack readers, this is where DeepSeek-V4 becomes more than launch news. If the weights and technical report are broadly accessible, the ecosystem can quickly build deployment recipes, quantization experiments, vLLM serving guides, benchmark dashboards, and agent frameworks around the model. That makes V4 relevant not only as a model to try, but as a platform layer for open AI infrastructure.

What to test before using it in production

Do not treat the headline context size as a production guarantee. Before using V4 in a real workflow, test five things:

- Needle-in-a-haystack retrieval: can the model recover exact details from the middle and end of a very long context?

- Tool-use stability: can it keep state across dozens or hundreds of agent steps?

- Cost per completed task: does Flash deliver enough quality to replace Pro for routine jobs?

- Latency under long prompts: does the system remain usable when context grows beyond normal RAG chunks?

- Safety and data controls: can your deployment enforce logging, access, and retention policies?

These tests are more valuable than a generic leaderboard because long-context systems often fail in application-specific ways. A model can score well on benchmarks but still struggle with a messy repository, repeated tool outputs, duplicated evidence files, or contradictory source documents.

Infrastructure implications

V4-Pro’s 49B active-parameter profile suggests serious serving requirements even with sparse activation. Operators should expect attention memory, KV-cache management, batching, and routing to be the real constraints. The 1M-token window also shifts pressure from model weights alone to context handling. For production, the question becomes: how much useful context should you include, and when should you compress, retrieve, or summarize instead?

V4-Flash is the more interesting deployment candidate for many teams because it keeps the same context headline with a smaller active footprint. If Flash is good enough for drafting, triage, extraction, and first-pass agent actions, Pro can be reserved for the harder final reasoning steps. That two-tier pattern is likely to become common: cheap long-context workers feeding a stronger reviewer model.

If you are already exploring local or self-hosted inference, pair this release with our vLLM production inference guide and the ChatGPT vs Claude vs Gemini comparison to frame what matters: latency, routing, observability, context discipline, and real task success.

Bottom line

DeepSeek-V4 Preview is significant because it combines three forces that usually arrive separately: open weights, MoE scale, and a million-token context window. V4-Pro is the capability statement. V4-Flash is the practical deployment bet. Together, they push open AI infrastructure toward longer-running agents, larger document workflows, and more flexible model routing.

The right next step is not blind adoption. It is structured evaluation. Test the Pro and Flash variants against your own long-context workloads, measure cost per completed task, and inspect how reliably each model uses information buried deep in context. If the technical claims hold up in real deployments, DeepSeek-V4 could become one of the most important open model releases for agentic and enterprise AI systems this year.

Sources

- DeepSeek on X: DeepSeek-V4 Preview is officially live and open-sourced — Official DeepSeek announcement: V4-Pro has 1.6T total / 49B active parameters; V4-Flash has 284B total / 13B active parameters; both support a one-million-token context window; ope

- DeepSeek-V4-Flash provider model card — Provider model card summarizing DeepSeek-V4-Flash as an efficiency-focused MoE model with 284B total parameters, 13B active parameters, and 1M context.

- Hugging Face blog: DeepSeek-V4 million-token context for agents — Technical commentary on DeepSeek-V4’s million-token context design, agentic workloads, inference FLOPs, and KV-cache scaling.

- DeepSeek V4 Preview Release | DeepSeek API Docs — 🚀 DeepSeek-V4 Preview is officially live & open-sourced! Welcome to the era of cost-effective 1M context length.

- deepseek-ai/DeepSeek-V4-Pro · Hugging Face — We present a preview version of DeepSeek-V4 series, including two strong Mixture-of-Experts (MoE) language models — DeepSeek-V4-Pro with 1.6T parameters (49B activated) and