The most interesting AI-health story right now is not about a hospital rolling out another copilot.

It is about a founder treating his own survival like an engineering problem.

GitLab co-founder Sid Sijbrandij has been speaking publicly about how he used AI, dense documentation, aggressive diagnostics, and a self-assembled medical network after standard osteosarcoma treatment options were largely exhausted. The story is compelling for obvious reasons. It is also easy to overstate.

So the useful way to read it is not as “AI cured cancer.”

It is that AI appears to have helped one highly resourced patient run a much faster and more informed research-and-decision loop around a rare cancer.

That distinction matters.

What is actually documented

OpenAI’s March 2026 event page, “From Terminal to Turnaround: How GitLab’s Co-Founder Leveraged ChatGPT in His Cancer Fight,” says Sijbrandij used ChatGPT to help track and understand scans, blood tests, and tissue samples after nearly two years of exhausting what standard medicine could offer. OpenAI describes the result as a personal research and development loop that helped him move faster, ask better questions, and coordinate complex care.

That source is useful, but it is also a short event description.

The fuller public account comes from a January 2026 profile in The Century of Biology, which lays out the sequence in more detail:

- Sijbrandij was diagnosed with a rare osteosarcoma

- he went through surgery, radiation, chemotherapy, and other standard interventions

- the cancer later recurred

- because of the rarity of his case and his age, he reportedly did not fit typical trial criteria

- he then shifted into a much more aggressive self-directed approach built around maximal diagnostics, experimental options, and personalized therapeutic planning

That profile also attributes to him the line:

“It became my own job to keep myself alive.”

And it reports that his cancer is currently in remission.

That is the core story people are reacting to.

Why this resonates so hard

This story travels because it collapses several major 2026 anxieties into one narrative:

- standard systems run out of options

- rare conditions fall outside the median care pathway

- determined individuals try to build their own operating system around the problem

- AI becomes a force multiplier for research, synthesis, and coordination

That last part is the real reason this belongs on open-techstack.

The interesting claim is not that ChatGPT became an oncologist. It is that AI may have meaningfully increased one patient’s ability to:

- process a huge volume of medical information

- connect scattered findings faster

- coordinate with specialists and companies

- maintain continuity across diagnostics and treatment hypotheses

That is a very different thing from replacing doctors.

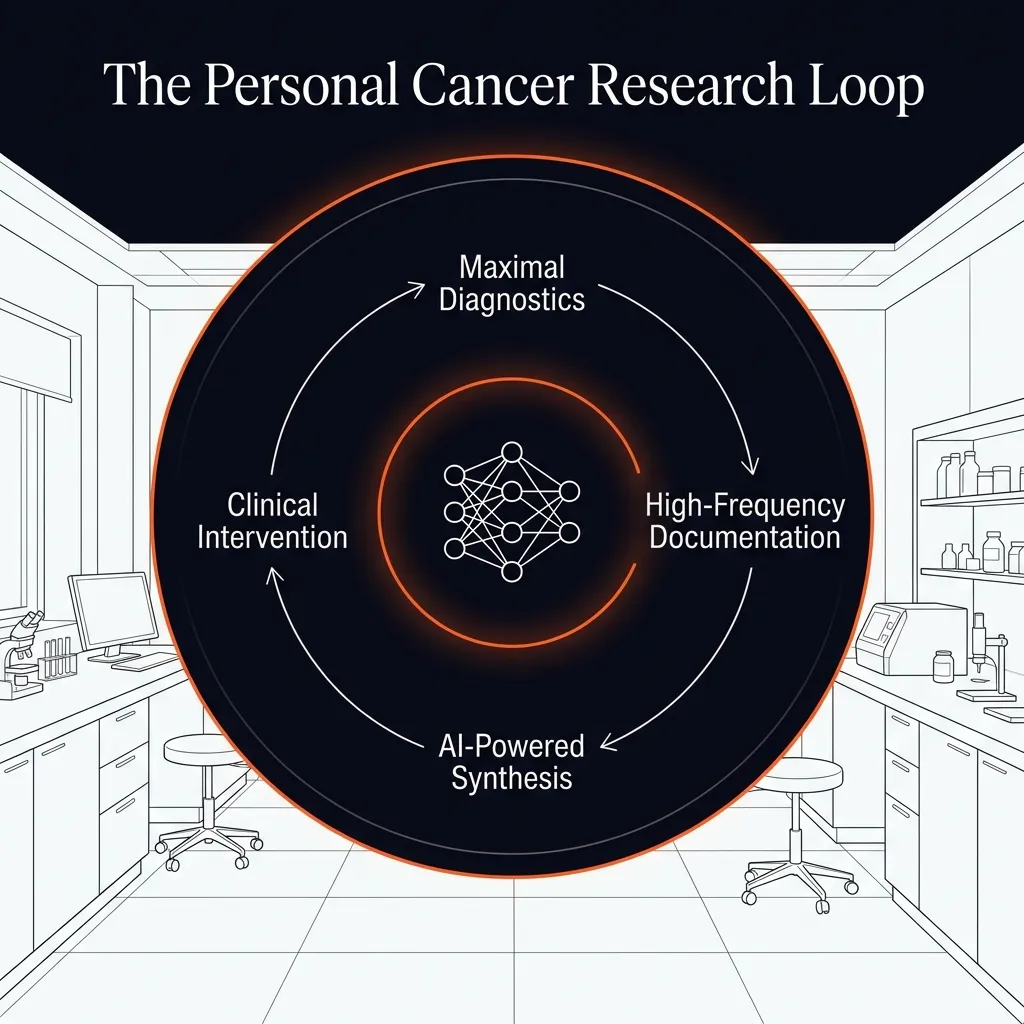

The real innovation here is the loop

The strongest part of this story is the operating model.

According to the public accounts, Sijbrandij’s approach had three important features:

- maximal diagnostics

- high-frequency documentation

- faster iteration across possible therapies

That is fundamentally a systems story.

The standard patient experience is often fragmented: one specialist, one visit, one report, one next step. By contrast, the care model described here looks much closer to a founder-style operating loop:

- gather as much signal as possible

- centralize the information

- recruit specialized experts

- build a ranked ladder of interventions

- update decisions continuously as new evidence comes in

AI fits naturally into that kind of loop because it is good at synthesis, pattern tracking, summarization, and helping humans navigate large information surfaces quickly.

This is one reason the story feels so significant. It suggests AI may be most useful in healthcare first as a coordination amplifier, not necessarily as an autonomous clinical brain.
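To make the "centralize the information" step of that loop concrete, here is a minimal Python sketch of a single chronological store for incoming records. Everything in it is an assumption for illustration: the `Record` and `Timeline` names, the fields, and the placeholder entries are hypothetical and are not taken from any public account of Sijbrandij's actual setup. The `verified` flag is there to show one way an AI-written summary could stay marked as unchecked until a human has reviewed it.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass(frozen=True)
class Record:
    """One piece of signal, e.g. a scan or lab result (hypothetical schema)."""
    when: date
    kind: str               # e.g. "scan", "blood_test", "tissue_sample"
    summary: str            # short text summary, possibly AI-drafted
    source: str             # where the record came from, kept for later checking
    verified: bool = False  # True only once a human has reviewed the summary


@dataclass
class Timeline:
    """A single chronological store instead of scattered reports."""
    records: list[Record] = field(default_factory=list)

    def add(self, record: Record) -> None:
        # Keep records ordered by date so continuity is preserved.
        self.records.append(record)
        self.records.sort(key=lambda r: r.when)

    def of_kind(self, kind: str) -> list[Record]:
        return [r for r in self.records if r.kind == kind]

    def unverified(self) -> list[Record]:
        # Everything a human still needs to check before relying on it.
        return [r for r in self.records if not r.verified]


# Placeholder entries only; dates, sources, and summaries are invented.
timeline = Timeline()
timeline.add(Record(date(2025, 3, 1), "scan", "placeholder summary B", "clinic A"))
timeline.add(Record(date(2025, 1, 15), "blood_test", "placeholder summary A",
                    "lab B", verified=True))

for r in timeline.records:
    status = "verified" if r.verified else "needs review"
    print(r.when.isoformat(), r.kind, r.source, status)
```

The design choice worth noticing is that provenance (`source`) and review status (`verified`) travel with every record, so the store supports coordination without pretending that any summary is trustworthy by default.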

What AI likely did well here

Based on the sources, the plausible role for AI is:

- organizing test results

- summarizing complex medical information

- preserving continuity across long timelines

- surfacing follow-up questions

- helping compare therapeutic options and diagnostics

That is already meaningful.

And it fits a broader pattern we keep seeing in real AI use cases: the highest-leverage applications are often not glamorous. They are the ones that help people manage complexity better and faster.

That connects directly to “How to Build a Practical AI Workflow Without Wasting Money.” The best AI workflow is rarely “ask one magic question.” It is usually a tighter loop of research, synthesis, iteration, and decision support.

What should not be overclaimed

This story is powerful enough that people are going to flatten it into the wrong headline.

The bad version is:

AI beat cancer.

That is not supported by the public evidence.

The more defensible version is:

AI helped one patient and his team run a more aggressive and informed personalized research process.

Even that should be read carefully.

There are obvious reasons this story does not generalize cleanly:

- Sijbrandij had unusual resources, access, and urgency

- rare-cancer care is highly specific

- one patient’s remission is not proof that the approach works for others

- the underlying treatment decisions still depended on clinicians, diagnostics, and experimental options

And because this is health-related, the burden of caution is higher than usual. A compelling anecdote is not a protocol.

Why this still matters

Even with all those caveats, the story matters a lot.

It points to a real future category:

AI as a patient-side research and coordination layer for complex disease.

That is potentially enormous.

Not because patients should replace their medical teams, but because the information burden in serious illness is often absurdly high. If AI can help patients and clinicians navigate that burden faster and more coherently, it could become one of the most important practical uses of AI outside coding and knowledge work.

This also sits close to the issues in “Why AI Hallucinates.” In medicine, hallucination risk is not an abstract model flaw. It is a real hazard. That means the value of AI in this context depends not just on speed, but on careful human verification, disciplined sourcing, and clear limits.

Final verdict

The most important lesson in Sid Sijbrandij’s story is not that AI became a doctor.

It is that AI may have helped turn a desperate, fragmented medical search into a tighter and more navigable research loop.

That is a narrower claim.

It is also the more believable and useful one.

If this category grows, the breakthrough will not be “AI replaces medicine.” It will be that AI helps patients and clinicians process more evidence, coordinate more intelligently, and move faster when time matters most.

That is a serious use case.

It is also one that deserves more rigor than hype.